Modern chemical experiments are costly and multidimensional. Many teams have traditionally relied on experienced intuition and iterative testing, but these methods are best suited to narrow, low-dimensional questions. They inevitably break down when faced with the complex interdependencies of a multi-variable system. In today’s high-dimensional design spaces, intuition alone can no longer guide us through modern chemical complexity.

In this guide, we unpack how chemists can evolve from old-school planning to an AI-augmented workflow, using tools like Atinary’s SDLabs to tie it all together.

Key takeaways

- The Multi-Variable Trap: Trial-and-error misses non-linear interactions and burns resources.

- DoE vs. AI-DoE: DoE provides structure; AI-DoE adds real-time adaptation by learning after every run.

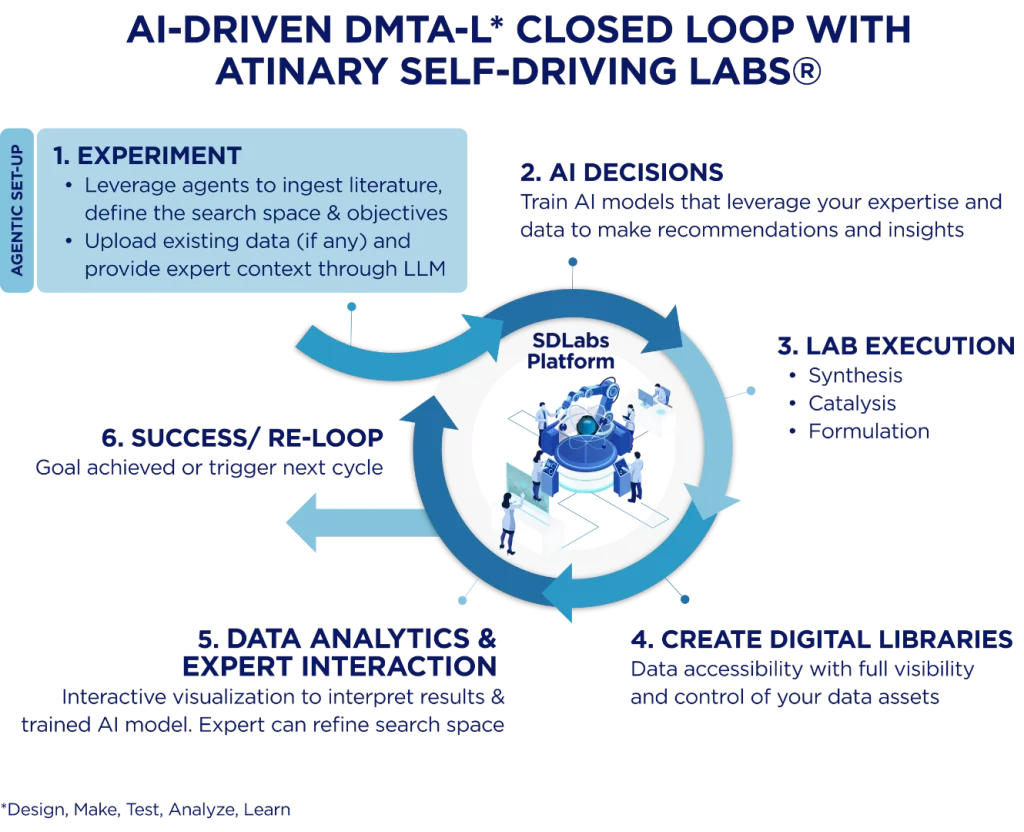

- Closed-Loop Advantage: The biggest efficiency gains come from the cycle: Seamless Experiment Setup → AI Decisions → Results → Model Update → Next Experiment until success is reached.

- Augmentation, Not Replacement: AI-DoE reduces noise and bias, freeing chemists to focus on high-level strategy and safety.

Main takeaway

In Traditional DoE, the goal is Characterization. You want a high-fidelity map of the entire neighborhood so you understand how every variable affects the outcome. In AI-DoE, the goal is Discovery. The priority shifts from exhaustive mapping to efficiency, intelligently navigating the landscape to converge on the ‘peak’ with minimal trials.

Classical DoE

Before we look at the role of AI in experiment design, let’s revisit classical DoE, the foundation and workhorse of the field. Systematic DoE planning maps the effects of controllable factors, both individually and through their interactions, with a minimal number of runs, and it builds statistical models that predict performance within the tested region.

DoE step-by-step

A typical DoE begins by defining the objective and variables.

- Define the Space: Identify your objective (e.g., maximizing yield) and variables (catalyst, temperature, concentration).

- Select the Design: Use a Factorial design for screening or Response Surface Methodology (RSM) for fine-tuning the “hill” of yield.

- Execute & Randomize: Run the experiments in randomized order to avoid bias from run sequence or drift, and record the responses carefully.

- Analyze the Model: Use ANOVA to quantify which factors actually drive the chemistry.
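To make these steps concrete, here is a minimal Python sketch of a two-level full factorial design for the three example factors. It assumes coded levels (-1 = low, +1 = high), a randomized run order, and the classic effect estimate (mean response at the high level minus mean at the low level). The `toy_yield` function is a hypothetical stand-in for real measurements, and a full analysis would go on to test these effects for significance with ANOVA.

```python
import itertools
import random

# Example factors from the list above, in coded units (-1 = low level, +1 = high level).
FACTORS = ["catalyst", "temperature", "concentration"]

def full_factorial(n_factors):
    """All 2**n combinations of the coded levels -1 and +1."""
    return [list(run) for run in itertools.product([-1, 1], repeat=n_factors)]

def toy_yield(run):
    """Hypothetical stand-in for a measured yield (illustrative, not real data)."""
    cat, temp, conc = run
    return 60 + 8 * cat + 5 * temp - 2 * conc + 3 * cat * temp

def effect(design, responses, columns):
    """Effect of a factor (one column) or an interaction (several columns):
    mean response where the product of coded levels is +1, minus mean where it is -1."""
    plus, minus = [], []
    for run, y in zip(design, responses):
        sign = 1
        for c in columns:
            sign *= run[c]
        (plus if sign > 0 else minus).append(y)
    return sum(plus) / len(plus) - sum(minus) / len(minus)

design = full_factorial(len(FACTORS))
run_order = list(range(len(design)))
random.Random(0).shuffle(run_order)        # randomize execution order to guard against drift
responses = [None] * len(design)
for i in run_order:
    responses[i] = toy_yield(design[i])    # in the lab: run experiment i, record the response

for j, name in enumerate(FACTORS):
    print(f"{name}: {effect(design, responses, [j]):+.1f}")
print(f"catalyst x temperature: {effect(design, responses, [0, 1]):+.1f}")
```

This prints effects of +16.0, +10.0, -4.0 and +6.0 (twice each coefficient in the toy model, as expected with ±1 coding). The catalyst × temperature effect is exactly the kind of interaction that one-factor-at-a-time testing would miss.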

What is AI-DoE and how does it work?

AI-DoE refers to closed-loop optimization. Instead of fixing a set of runs in advance, AI-DoE uses a machine-learning model to continuously learn from data and suggest the next experiments.

How the Cycle Works

In a typical cycle, an initial set of experiments is run (chosen by some simple scheme, e.g. random or space-filling). The results train a surrogate model (often a Gaussian process) that predicts the objective (yield, conversion, etc.) across the untested space and quantifies uncertainty at each point. An acquisition function then balances:

- Exploration: Probing uncertain regions where the model is unsure and needs more data.

- Exploitation: Targeting promising regions where the model predicts high performance.

The next experiment is chosen by maximizing this acquisition function, effectively seeking the condition expected to yield the most new information or improvement.
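The exploration/exploitation trade-off can be sketched with two common acquisition functions, expected improvement (EI) and upper confidence bound (UCB). This is an illustration under assumptions: the candidate names, the predicted means and standard deviations, and the `kappa` and `xi` settings are hypothetical, and real tools may use other acquisition functions.

```python
import math

def norm_pdf(z):
    return math.exp(-0.5 * z * z) / math.sqrt(2 * math.pi)

def norm_cdf(z):
    return 0.5 * (1 + math.erf(z / math.sqrt(2)))

def expected_improvement(mu, sigma, best, xi=0.01):
    """EI for maximization: expected amount by which a candidate beats `best`."""
    if sigma <= 0:
        return max(mu - best - xi, 0.0)
    z = (mu - best - xi) / sigma
    return (mu - best - xi) * norm_cdf(z) + sigma * norm_pdf(z)

def upper_confidence_bound(mu, sigma, kappa=2.0):
    """UCB: optimistic estimate; a larger kappa rewards uncertainty (exploration)."""
    return mu + kappa * sigma

# Hypothetical surrogate predictions (mean yield %, std dev) at three untested conditions.
candidates = {
    "near current best": (71.0, 1.0),   # exploitation: high mean, low uncertainty
    "unexplored corner": (64.0, 10.0),  # exploration: lower mean, high uncertainty
    "known poor region": (50.0, 2.0),
}
best_so_far = 70.0  # hypothetical best yield observed so far

for kappa in (0.5, 3.0):
    pick = max(candidates, key=lambda k: upper_confidence_bound(*candidates[k], kappa))
    print(f"UCB kappa={kappa}: {pick}")
pick = max(candidates, key=lambda k: expected_improvement(*candidates[k], best_so_far))
print(f"EI: {pick}")
```

With a small `kappa`, UCB favors the well-understood point near the current best; with a large `kappa`, the uncertain corner wins. EI also prefers the uncertain corner here, because its wide predictive spread gives it a better chance of beating 70% yield.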

Importantly, Bayesian Optimization (BO), the engine behind this loop, is designed to be sample-efficient. By explicitly modeling uncertainty and prioritizing informative samples, BO typically locates an optimum in far fewer trials than exhaustive or grid searches. See video below.

Video description: This surface plot illustrates how AI-DoE navigates a complex design space in real-time. The ML optimizer interprets data at every iteration to make predictions (gray squares) and suggest the next optimal conditions. The scientist records current observations (purple stars), which the algorithm integrates with past observations (blue stars) to identify the current best result (red plus sign). By balancing exploration with exploitation, this iterative process identifies optima orders of magnitude faster than traditional methods, thereby reducing the search space from thousands or even millions of combinations to a few dozen high-value experiments.

With modern software, chemists don’t have to code these models from scratch. In graphical AI-DoE tools, users define variables and constraints via dropdowns or by importing an experiment from an electronic lab notebook (ELN), and then instantly receive suggestions for their next experimental conditions. These tools also provide a clear window into the AI’s decision-making: users can view 2D slices of the predicted response surface, where shaded zones illustrate the model’s uncertainty, and see exactly why the software is probing a new region or refining a promising result. This human-friendly approach makes BO a powerful optimization tool without the need for advanced statistical training.

In summary, AI-DoE is an adaptive experiment loop. At each step it fits a probabilistic model to all past data, then picks the new runs it expects to learn the most from. The key is balancing exploration and exploitation. This approach excels when experiments are expensive, dimensions are many, or the objective landscape is complex. By the end of a BO campaign, we typically have both a model of the response and near-optimal conditions, discovered with far fewer trials than an exhaustive search would need.
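For a single factor, the whole loop fits in a few dozen lines. The sketch below is a minimal illustration, not how any particular platform works internally: it assumes a zero-mean Gaussian process with a fixed squared-exponential kernel (length scale 0.15, no hyperparameter fitting), a UCB acquisition with `kappa = 2`, a discretized search space, and a hypothetical `toy_experiment` function standing in for the lab.

```python
import numpy as np

def rbf_kernel(a, b, length_scale=0.15, variance=1.0):
    """Squared-exponential covariance between 1-D input arrays a and b."""
    d = a[:, None] - b[None, :]
    return variance * np.exp(-0.5 * (d / length_scale) ** 2)

def gp_posterior(x_train, y_train, x_test, noise=1e-4):
    """Posterior mean and std of a zero-mean GP fitted to standardized targets."""
    y_mean, y_std = y_train.mean(), y_train.std() + 1e-12
    y = (y_train - y_mean) / y_std
    K = rbf_kernel(x_train, x_train) + noise * np.eye(len(x_train))
    K_s = rbf_kernel(x_train, x_test)
    L = np.linalg.cholesky(K)
    alpha = np.linalg.solve(L.T, np.linalg.solve(L, y))   # K^-1 y via Cholesky factors
    mu = K_s.T @ alpha
    v = np.linalg.solve(L, K_s)
    var = np.clip(1.0 - np.sum(v ** 2, axis=0), 1e-12, None)
    return mu * y_std + y_mean, np.sqrt(var) * y_std       # back to original units

def toy_experiment(x):
    """Hypothetical two-peaked yield curve over a normalized factor x in [0, 1]."""
    return 60 * np.exp(-((x - 0.7) / 0.1) ** 2) + 30 * np.exp(-((x - 0.2) / 0.15) ** 2)

rng = np.random.default_rng(0)
candidates = np.linspace(0.0, 1.0, 201)                  # discretized search space
x_obs = rng.choice(candidates, size=3, replace=False)    # random initial seed set
y_obs = toy_experiment(x_obs)

for _ in range(12):
    mu, sigma = gp_posterior(x_obs, y_obs, candidates)   # refit on all data so far
    ucb = mu + 2.0 * sigma                                # exploration vs. exploitation
    ucb[np.isin(candidates, x_obs)] = -np.inf             # never repeat a run
    x_next = candidates[np.argmax(ucb)]
    x_obs = np.append(x_obs, x_next)
    y_obs = np.append(y_obs, toy_experiment(x_next))      # "run" the suggested experiment

best = np.argmax(y_obs)
print(f"{len(x_obs)} experiments; best x = {x_obs[best]:.3f}, yield = {y_obs[best]:.1f}")
```

In practice, the kernel length scale and noise level would be fitted to the data at each step, and the loop would handle several factors and batched suggestions, but the structure (fit, score, pick, run, refit) stays the same.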

Why you need AI-Driven DoE when traditional DoE is not sufficient

While traditional DoE remains a foundational tool for mapping well-understood spaces, modern chemical development often involves variables that defy simple grid-based approaches. The choice between traditional methods and AI-DoE is a balance of resource scarcity, system complexity, and statistical overhead. Historically, successful DoE required a “Design Expert” with deep statistical training to avoid pitfalls like confounded variables. AI-DoE shifts this burden, using intuitive interfaces to put the power of advanced optimization directly into the hands of the bench scientist.

| Feature | Use Traditional DoE When… | Use AI-DoE (BO) When… |
|---|---|---|
| Problem Scale | Low-Dimensional Problems: 3-5 key factors. | High-Dimensional Complexity: 6+ complex, interacting factors. |
| Sample Cost | Abundant/Cheap: High-throughput runs are fast and inexpensive. | Precious/Costly Samples: Each trial takes days or uses expensive precursors. |
| User Expertise | Statistically Intensive: Requires a trained expert to design and interpret the matrix. | Domain-Driven: Accessible to chemists; the AI handles the underlying math. |
| Constraints | Simple: Factors have independent, linear bounds. | Complex: You have “If/Then” rules or chemical compatibility constraints. |
| Primary Goal | Mapping: You want to visualize the entire landscape (Response Surface). | Optimization: You want to find the “Global Best” in the fewest steps. |
| Design Style | Static: A fixed plan is made upfront and rarely changes. | Adaptive: The model pivots and learns after every single vial is moved. |

Putting AI-DoE into practice

Implementing AI-DoE transforms the laboratory into a continuous learning machine. This shift moves the focus from “trial-and-error” to a more front-loaded digital strategy, allowing scientists to navigate complexity and innovate with speed.

Whether you do manual bench work or use a high-throughput robotic platform, the process scales to an AI-driven, closed-loop Design-Make-Test-Analyze-Learn (DMTA-L) cycle.

The Closed-Loop Cycle in the Lab

- Experiment (Agentic Set-Up): Leverage AI agents to ingest literature and propose the search space and objectives. Scientists then fix the search space upfront by listing factors (e.g., pH, loading, temperature) and their bounds, allowing the system to select an initial “seed set” that maps the space.

- AI Decisions: Train AI models that leverage human expertise and data to make intelligent recommendations. After each batch, the platform suggests the next optimal conditions (e.g. 78°C at 10 mol% catalyst) accompanied by a confidence or uncertainty estimate.

- Lab Execution: The suggested experiments are carried out in the lab, covering areas such as synthesis, catalysis, and formulation.

- Create Digital Libraries: Ensure data accessibility with full visibility and control over all data assets generated during execution.

- Data Analytics & Expert Interaction: As results flow back via CSV or API, the model updates instantly. Use interactive visualizations to interpret results; if the chemical landscape is “bumpy” or has multiple peaks (e.g., bimodal behavior with different catalysts), the AI systematically explores those possibilities until it homes in on the peak.

- Success / Re-Loop: The cycle continues until objectives are achieved or a new cycle is triggered to further refine results.
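The set-up and data-return steps above can be sketched as follows. This is a minimal illustration under stated assumptions: it uses a Latin hypercube seed set (one common space-filling scheme; the platform's own seeding strategy is not specified here), hypothetical factor bounds, and a hypothetical CSV layout with `run_id` and `yield_pct` columns whose values are placeholders.

```python
import csv
import io
import random

# Hypothetical search space: factor name -> (lower bound, upper bound).
SEARCH_SPACE = {"pH": (3.0, 9.0), "loading_mol_pct": (1.0, 10.0), "temperature_C": (40.0, 100.0)}

def latin_hypercube_seed(space, n_runs, seed=0):
    """Space-filling seed set: each factor's range is cut into n_runs equal strata,
    and every stratum is sampled exactly once."""
    rng = random.Random(seed)
    columns = {}
    for name, (lo, hi) in space.items():
        strata = list(range(n_runs))
        rng.shuffle(strata)                      # pair strata randomly across factors
        width = (hi - lo) / n_runs
        columns[name] = [lo + (s + rng.random()) * width for s in strata]
    return [{name: columns[name][i] for name in space} for i in range(n_runs)]

seed_runs = latin_hypercube_seed(SEARCH_SPACE, n_runs=6)

# Results returning from the lab as a CSV export (hypothetical layout, placeholder values).
results_csv = """run_id,yield_pct
0,41.2
1,55.0
"""
observations = []
for row in csv.DictReader(io.StringIO(results_csv)):
    conditions = seed_runs[int(row["run_id"])]
    observations.append((conditions, float(row["yield_pct"])))

# `observations` is what the surrogate model would be refit on before the next suggestion.
print(len(observations), "results ingested;", len(seed_runs) - len(observations), "runs pending")
```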

AI-DoE in Action: Case studies

Theoretical efficiency is one thing; lab-proven performance is another.

ETH Zurich: AI-Driven Catalyst Development for the Conversion of CO2 into Renewable Fuel

In tackling a global economic and sustainability challenge, scientists from ETH Zurich SwissCat+ used Atinary’s SDLabs AI Platform to optimize a catalyst formulation for CO2-to-methanol conversion. This high-dimensional problem involved 11 parameters and over 20 million possible combinations, a landscape far too vast for traditional trial-and-error. By synthesizing and testing just 144 catalysts, sampling only 0.00072% of the design space, SDLabs delivered a 1,000x acceleration, replicating 100 years of traditional catalysis R&D in just six weeks. Bayesian Optimization in SDLabs handled this complexity by optimizing four distinct objectives simultaneously:

- Maximizing CO2 conversion

- Maximizing methanol selectivity

- Minimizing CH4 selectivity

- Minimizing catalyst cost
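The case study does not say how the four objectives were traded off. One standard way to compare candidates on several objectives at once is Pareto dominance: a candidate is kept only if no other candidate is at least as good on every objective and strictly better on at least one. A minimal sketch, with hypothetical objective keys and illustrative numbers that are not from the study:

```python
# Hypothetical objective keys; +1 = maximize, -1 = minimize (matching the four goals above).
DIRECTIONS = {"co2_conversion": +1, "meoh_selectivity": +1, "ch4_selectivity": -1, "cost": -1}

def dominates(a, b):
    """True if a is at least as good as b on every objective and strictly better on one."""
    at_least_as_good = all(d * a[k] >= d * b[k] for k, d in DIRECTIONS.items())
    strictly_better = any(d * a[k] > d * b[k] for k, d in DIRECTIONS.items())
    return at_least_as_good and strictly_better

def pareto_front(candidates):
    """Candidates that no other candidate dominates."""
    return [c for c in candidates if not any(dominates(o, c) for o in candidates if o is not c)]

# Illustrative catalyst results (hypothetical numbers, not data from the study).
catalysts = [
    {"name": "A", "co2_conversion": 12.0, "meoh_selectivity": 70.0, "ch4_selectivity": 5.0, "cost": 3.0},
    {"name": "B", "co2_conversion": 15.0, "meoh_selectivity": 60.0, "ch4_selectivity": 8.0, "cost": 4.0},
    {"name": "C", "co2_conversion": 11.0, "meoh_selectivity": 65.0, "ch4_selectivity": 6.0, "cost": 3.5},
]
print([c["name"] for c in pareto_front(catalysts)])
```

Here C is dropped because A beats it on all four objectives, while A and B both survive as different trade-offs between conversion and selectivity. Multi-objective BO generally aims to push this front outward with each new batch.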

Optimized Sustainable Hydroformylation and Rhodium Catalysts

Many daily essentials, from detergents and fragrances to pharmaceuticals, rely on an industrial reaction called hydroformylation. However, this process typically relies on rhodium catalysts, and rhodium is among the scarcest and most expensive metals on Earth. For process chemists, maximizing efficiency while minimizing rhodium loading requires navigating a complex seven-dimensional (7D) search space where temperature, pressure, and catalyst concentrations interact in non-linear ways.

In a jointly published study, researchers used AI-DoE and found optimal reaction conditions by iteration 14 of a 22-iteration campaign. Running in batches of four, the closed-loop approach reduced reaction time and total cost, showing that AI-driven insights can reconcile economic constraints with high-performance chemistry.

Limitations and best practices

Data Quality over Quantity

“Garbage in, garbage out” remains the golden rule. If the measured response is noisy or the process drifts (e.g., after equipment calibration changes), the model can be misled. It’s also crucial to incorporate constraints and domain knowledge explicitly. For instance, if a certain solvent causes safety issues at high temperature, that limit should be encoded so the optimizer doesn’t waste runs on it. High-fidelity data is the fuel for BO: AI-DoE accelerates learning only when the experimental results are accurate and reproducible.
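Encoding such a rule can be as simple as filtering the candidate set before the acquisition function is maximized. A minimal sketch, in which the solvent names and temperature limits are hypothetical placeholders rather than real safety data:

```python
import itertools

SOLVENTS = ["solvent_A", "solvent_B", "solvent_C"]   # hypothetical categorical factor
TEMPERATURES = [40, 60, 80, 100, 120]                # candidate temperatures in °C

# Hypothetical safety limits (maximum allowed temperature per solvent), set by the chemist.
MAX_SAFE_TEMP = {"solvent_A": 120, "solvent_B": 80, "solvent_C": 60}

def is_feasible(solvent, temperature):
    """Domain rule: never propose a solvent above its safe temperature limit."""
    return temperature <= MAX_SAFE_TEMP[solvent]

candidates = [(s, t) for s, t in itertools.product(SOLVENTS, TEMPERATURES) if is_feasible(s, t)]
print(f"{len(candidates)} feasible of {len(SOLVENTS) * len(TEMPERATURES)} combinations")
# The optimizer's acquisition function would then be maximized over `candidates` only,
# so no experimental budget is spent on unsafe conditions.
```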

Interpretability and trust

Traditional BO can seem like a “black box” if its suggestions are followed blindly. Good practice is to regularly check the model’s calibration: look at the uncertainty of its predictions and ask whether new results land where the model expected. Many platforms visualize both the predicted response and the confidence interval at the suggested point, helping chemists verify that new experiments are truly informative.
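One simple calibration check is to record the model's prediction before each run and then count how often the measured result lands inside the predicted ~95% interval (mean ± 1.96 standard deviations, assuming roughly Gaussian errors). A minimal sketch with hypothetical numbers; the 80% alert threshold is an arbitrary choice for illustration, and with only five runs this is a rough sanity check rather than a statistical test:

```python
def interval_coverage(mu, sigma, observed, z=1.96):
    """Fraction of observed results inside the model's ~95% prediction interval."""
    inside = sum(abs(y - m) <= z * s for m, s, y in zip(mu, sigma, observed))
    return inside / len(observed)

# Hypothetical predictions recorded *before* each run, and the measured outcomes.
predicted_mean = [62.0, 70.5, 55.0, 68.0, 74.0]
predicted_std = [3.0, 2.0, 6.0, 2.5, 1.5]
measured = [60.1, 71.0, 63.0, 75.5, 73.2]

coverage = interval_coverage(predicted_mean, predicted_std, measured)
print(f"coverage: {coverage:.0%}")
if coverage < 0.8:  # hypothetical alert threshold
    print("Model looks over-confident: check noise, drift, or constraints before trusting it.")
```

Coverage far below the nominal 95% suggests the model is over-confident, often a sign of unmodeled noise or drift; coverage near 100% with very wide intervals suggests it has not yet learned much about that region.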

Human-in-the-loop strategic steering

While traditional BO leaves little room for human intervention, tools like Atinary’s SDLabs are built for a strategic partnership. The most effective chemistry AI workflows shouldn’t just ask a scientist to “check” a result; they should let the expert steer the discovery engine, combining domain expertise and intuition with AI-generated insights to navigate complex chemical space.

Here are some practical guardrails and tips:

- Steering with Intuition: You do more than review suggestions. You provide your intuition at every step, seeding the model with specific results from a key paper or steering the search toward the most chemically sensible regions.

- Interpreting with Context: As the model evolves, it generates a probabilistic map of your chemical space. Your role is to interpret these insights, reconciling the AI’s data-driven insights and patterns with your own domain expertise to decide what to try next.

- The Strategic Layer: The scientist remains the ultimate navigator. By providing the strategic layer (defining goals, setting constraints, and interpreting results), you mitigate the risk of the model pursuing statistically valid but chemically impractical paths, while ensuring every step is safe, feasible, and high-value.

- Agility at the Bench: A closed-loop system must remain open to human intervention. Atinary’s SDLabs platform ensures the scientist can always step in, adjust parameters on the fly, and pivot the strategy as real-time observations emerge at the bench.

Closing Statement

The transition from traditional, intuition-based planning to AI-DoE marks a fundamental shift in our research velocity. By integrating the statistical structure of classical methods with the adaptive power of Bayesian Optimization, AI-DoE transforms the laboratory into a high-throughput discovery and innovation engine. It doesn’t just find a better result; it aims for the best result with a fraction of the traditional resource investment.

In this reimagined workflow, the scientist provides the strategic layer: defining the mission, seeding the model with domain expertise, intuition, and published results, and steering the search based on real-time observations at the bench. This partnership allows you to reconcile experience and known literature with new, AI-generated insights, moving from isolated wins toward breakthroughs that answer global challenges.

See the data behind the breakthroughs and discover how Atinary is augmenting scientists with AI and robotics to accelerate discovery and innovation, unlocking limitless science for global challenges in health and sustainability.

If you’d like to explore whether this fits your lab, book a meeting with us and we’ll assess it together.

This post was developed with contributions from Dr. Mohammed Azzouzi (Applications Engineer) and Lucien Brey (ML Scientist) at Atinary.